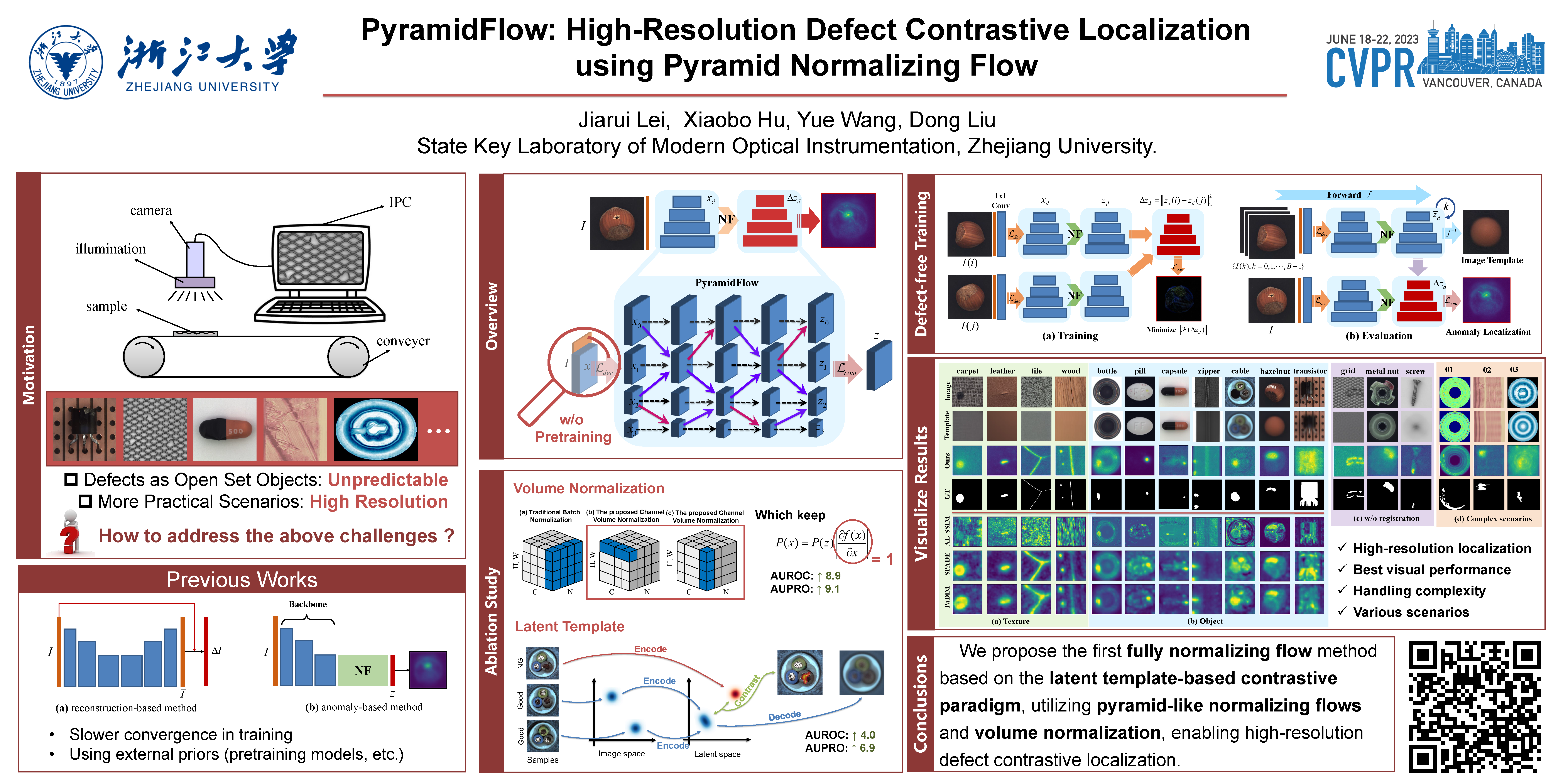

During industrial processing, unforeseen defects may arise in products due to uncontrollable factors. Although unsupervised methods have been successful in defect localization, their usual reliance on pre-trained models results in low-resolution outputs, which degrades visual performance. To address this issue, we propose PyramidFlow, the first fully normalizing flow method without pre-trained models that enables high-resolution defect localization. Specifically, we propose a latent template-based defect contrastive localization paradigm that reduces intra-class variance, as pre-trained models do. In addition, PyramidFlow uses pyramid-like normalizing flows for multi-scale fusion and volume normalization to aid generalization. Our comprehensive studies on MVTecAD demonstrate that the proposed method outperforms comparable algorithms that do not use external priors, and it even achieves state-of-the-art performance in the more challenging BTAD scenarios.

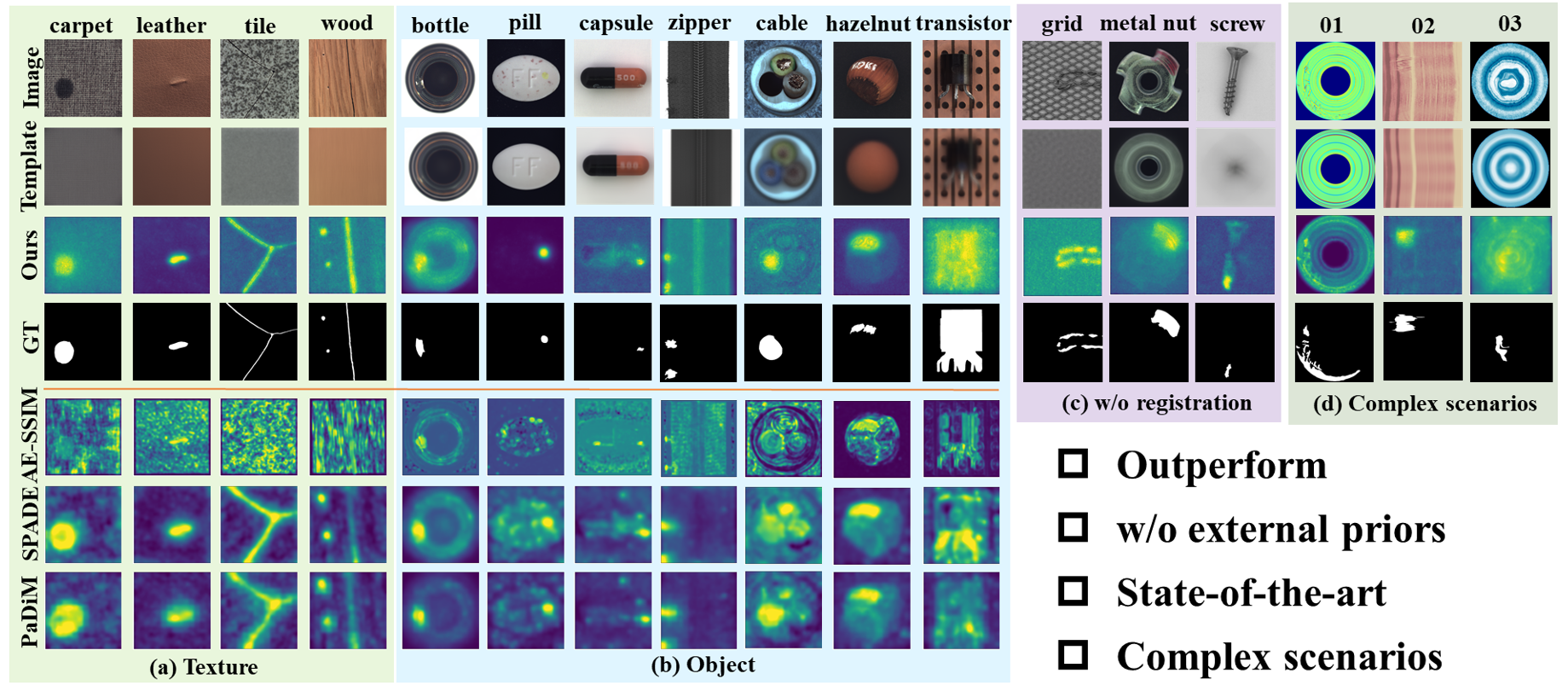

Visualization of our results on MVTecAD and BTAD. From top to bottom: original images, estimated image templates, our localization results, and ground truths. (a) Results for four texture categories on MVTecAD. (b) Results for seven challenging object categories on MVTecAD. (c) Results for the three unregistered categories on MVTecAD. (d) Results for complex scenarios on BTAD.

@InProceedings{Lei_2023_CVPR,
    author    = {Lei, Jiarui and Hu, Xiaobo and Wang, Yue and Liu, Dong},
    title     = {PyramidFlow: High-Resolution Defect Contrastive Localization Using Pyramid Normalizing Flow},
    booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},
    month     = {June},
    year      = {2023},
    pages     = {14143-14152}
}